How Nature Builds Computers

23/04/2013

At a basic level, life could be seen as any self-replicating system: a physical system in the universe which manages to remain stable for long enough that it can use the resources around it to create copies of itself. We stack many other requirements onto life too, but self-replication is the ultimate one. Without it, a system is nothing more than a chemical happenstance in the vast universe.

At first this self-replicating biology may have happened by chance, like some form of chemical chain reaction or equilibrium. These would have been systems with a minuscule lifetime and no real ability to fight against entropy and change. Complicated behavior is required if such systems are to last more than a few seconds: movement, feeding, and some protection against the elements of the outside world.

Fighting against the weathering and unpredictability of the environment is the biggest challenge to life. On our blue planet evolution has stepped up to this challenge. It has created life that is incredible and diverse. There are many lessons to learn from it. Not just lessons in creating stable systems, but creating beautiful ones too. The scorpion is one of my favorites.

When we create computer programs meant to be left to their own devices, we are in a sense creating these autonomous systems, much like animals operating in a simple environment. Can we analyze Mother Nature to see how she manages to adapt so well to so many complex and unpredictable environments?

One approach is to take conservative action, observe the environment carefully, and only act when there are predictable outcomes. Another is to use formal verification or powerful simulation to prove that no components of the system can fail in any situation. But I am talking about something more fundamental and devoid of these high level mathematical and logical concepts.

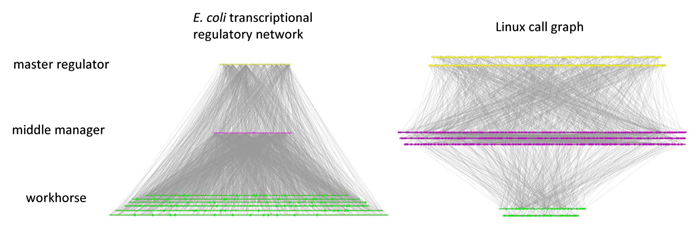

A radical idea comes from scientists who in 2010 compared the functionality of an E. coli bacterium to that of the Linux kernel. They compared the "call graphs" of the two systems.

In an E. coli there are a few basic functions that can be performed at the very top level. These are the overall states, or behaviors, of the bacterium. They all use some middle level systems and controllers, which in turn use a very large number of different basic physical functions for creating proteins and meeting biological needs.

The E. coli has a few simple goals in life, a few simple high level things it tries to achieve. But in trying to achieve these there are a large number of logical and physical processes going on at the low level. This muddle usually does what is required by the high level goals - but the method is convoluted and somewhat imprecise. It is not a design any sensible person would use.

The Linux kernel is the opposite. At the top level are a large number of user programs that perform all the tasks needed by a modern computer. They call on many different mid-level controllers which manage the major systems such as networking, audio and video. These finally call a small set of important functions which run the core of the machine. The Linux kernel is imprecise and fuzzy at the high level - while well defined and simple the closer it gets to the machinery.

In this way the systems are vertical reflections of each other. The E. coli resembles a pyramid and the Linux kernel resembles an upside down pyramid.
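To make the two shapes concrete, here is a rough sketch that counts how many functions sit at each depth of a call graph. Both graphs are tiny made-up examples of mine (every name in them is hypothetical), not data from the 2010 comparison.

```python
from collections import Counter


def layer_widths(calls, roots):
    """Count the nodes at each depth of a call graph, top layer first.

    `calls` maps a function name to the names it calls. Each node is placed
    at the shallowest depth at which it is reached from `roots`.
    """
    depth = {}
    frontier = list(roots)
    level = 0
    while frontier:
        next_frontier = []
        for node in frontier:
            if node not in depth:
                depth[node] = level
                next_frontier.extend(calls.get(node, []))
        frontier = next_frontier
        level += 1
    counts = Counter(depth.values())
    return [counts[i] for i in range(len(counts))]


# Hypothetical bacterium: few goals, a few controllers, many basic parts.
bacterium_like = {
    "grow": ["ctrl_a", "ctrl_b"],
    "divide": ["ctrl_b", "ctrl_c"],
    "ctrl_a": ["e1", "e2", "e3"],
    "ctrl_b": ["e3", "e4", "e5"],
    "ctrl_c": ["e6", "e7", "e8", "e9"],
}

# Hypothetical kernel: many programs, a few subsystems, one small core.
kernel_like = {
    "browser": ["net", "video"],
    "player": ["audio", "video"],
    "chat": ["net"],
    "editor": ["video"],
    "mail": ["net"],
    "game": ["audio"],
    "net": ["core"],
    "audio": ["core"],
    "video": ["core"],
}
programs = ["browser", "player", "chat", "editor", "mail", "game"]

print(layer_widths(bacterium_like, ["grow", "divide"]))  # [2, 3, 9]
print(layer_widths(kernel_like, programs))               # [6, 3, 1]
```

The first list widens as it goes down, the second narrows: a pyramid and its reflection.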

Their reliability is also mirrored. In the Linux kernel it is most important that the core functionality at the bottom always performs correctly and can be relied on. Otherwise all the other systems break. At the middle level it is still important that everything functions, but one thing breaking does not make the system completely dysfunctional. At the top, programs often crash. While that is annoying, there are usually other ways to achieve the same task.

In the bacterium, evolution has rendered the bottom layer somewhat unpredictable. Some of the basic functions don't work, some only work occasionally and some do something only similar to what was intended. The middle layer, which manages behavior, is also unpredictable but will tend toward the correct behavior. The top layer is the most important for a correctly functioning organism.

This backward design is so successful in nature because of mutation. Mutation always happens at the bottommost level - the genetic machinery - the DNA. If a fundamental component were to change at the bottom level in the Linux kernel the whole system would be broken. This isn't true in the bacterium. A break in one bottom level piece of machinery doesn't make much difference. There are so many other routes to the same behavior that it will probably still function the same.
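A toy model shows why the redundancy matters. This is only a sketch under my own assumptions: each top level behavior has one or more alternative routes, a route works only if all of its bottom level parts work, and all the names and structures are invented for illustration.

```python
def fraction_working(system, broken):
    """Return the fraction of top level behaviors that still work.

    `system` maps each behavior to a list of alternative routes. A route is
    a set of bottom level parts, and it works only if none of them is broken.
    A behavior works if at least one of its routes works.
    """
    working = sum(
        1
        for routes in system.values()
        if any(route.isdisjoint(broken) for route in routes)
    )
    return working / len(system)


# Hypothetical kernel: many programs, each with a single route through
# the same small core.
kernel_like = {f"program_{i}": [{"core_a", "core_b"}] for i in range(6)}

# Hypothetical bacterium: few behaviors, each with several redundant routes
# through different low level parts.
bacterium_like = {
    "feed": [{"p1", "p2"}, {"p3"}, {"p4", "p5"}],
    "move": [{"p6"}, {"p7", "p8"}, {"p9"}],
}

# "Mutate" a single bottom level part in each system.
print(fraction_working(kernel_like, {"core_a"}))   # 0.0
print(fraction_working(bacterium_like, {"p1"}))    # 1.0
```

One broken core part takes down every program in the first system, while the second carries on through another route. It takes a break in every route for a behavior before the bacterium-like system loses it.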

These two design schemes show up throughout both nature and computer science.

In computer science we love perfect abstractions: components we can use without knowing the internals, and without them ever unexpectedly failing. The computer in my mobile phone has been clicking over for five years and has never got an addition wrong. Another example is the internet. One can use it without any idea of how it works. It is vast and complicated, but (almost) always just works.

In nature things are softer. Animals tend to have a few simple behaviors. But the systems which carry those behaviors out are huge and complex with many flaws and faults. They have simple, unreliable ways of overcoming those issues. Somehow it almost always works out in the end.

To the guiding hand of a watchmaker the backward design of an E. coli is abhorrent. The complexity is intractable and chaotic. It is the design of a Jackson Pollock madman.

But Mother Nature is no mere watchmaker. Evolution laughs at a system that has run for just five years. There is another ledge to climb onto in system design. Eventually programmers must hand her role over. The computers will have to start doing the designing for us. And they will do a horrible job of it. But somehow it will feel more familiar. They will have the quirks of the flora and fauna we love. Somehow those systems will start to feel alive.

_________

Sources